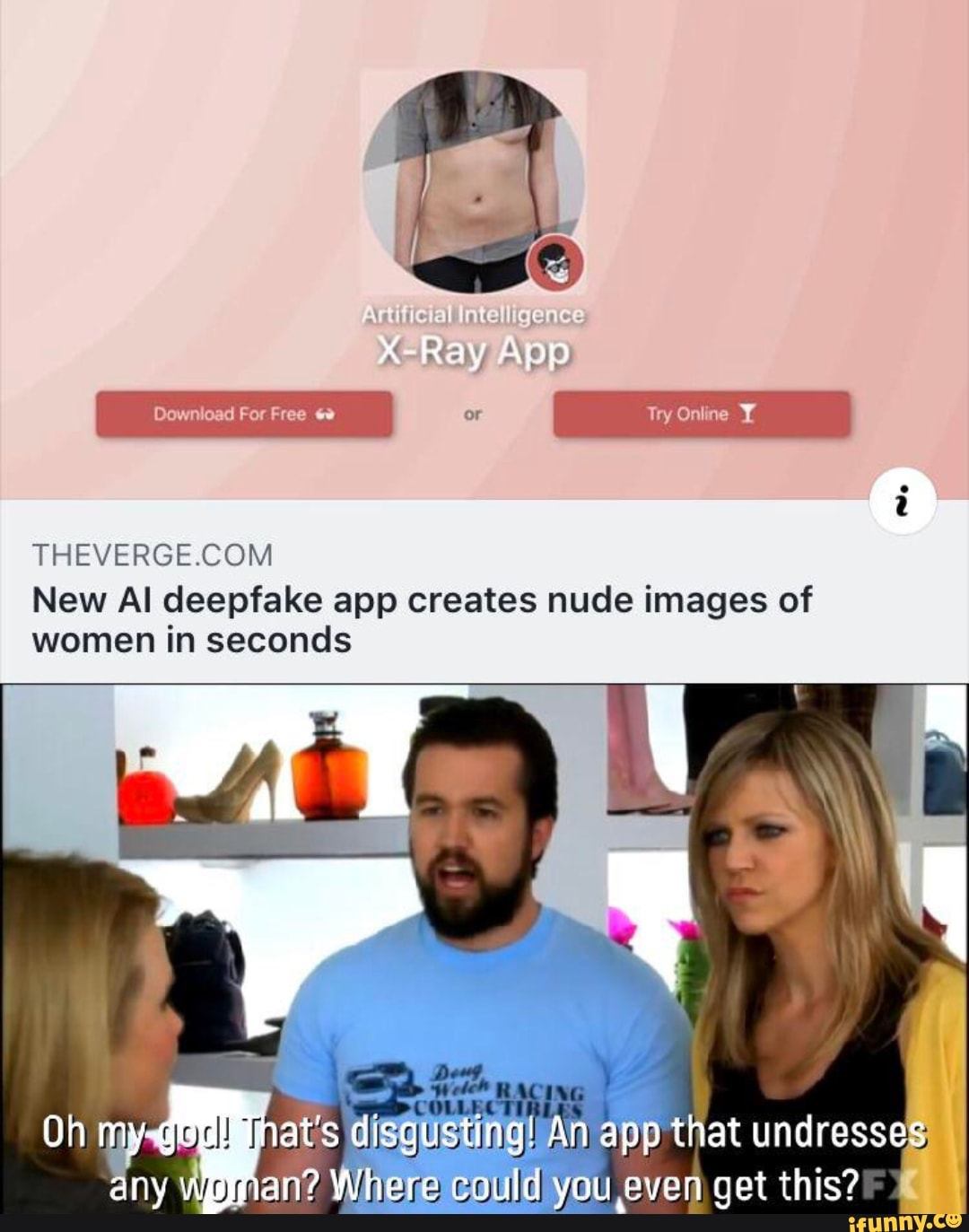

- New ai deepfake app

- The leadership experience daft 6th edition exhibit 12

- No sim card installed

- Add a background image in youtube movie maker

- Nokia recovery tool

- Sub zero ultimate mortal kombat 3

- Sonic the hedgehog 1 2 and 3

- Empire earth iii spolszczenie

- Reimage repair reddit

- Nobody wanna see us together release date

- Crazy beautiful in spanish

- Download games metal slug 6 pc

- Amplitube 3 sounds like robot

- My hearts a stereo pick me up and let-s go lyrics

On Friday, Chinese company Momo launched Zao, a new deepfake app that lets iPhone users in China replace actors’ faces in video clips with their own. It's sparked a wave of controversy among privacy experts.

The app quickly went viral, and by Sunday, it topped the Chinese App Store’s free chart.

But users may have been a bit too eager to use Zao to create deepfakes of themselves replacing Leonardo DiCaprio in “Titanic.” By using the app, they essentially granted Momo the ability to use their faces however it wanted - prompting a wave of backlash from privacy experts.

Illegal Terms

According to a Bloomberg story, the original version of Zao’s user agreement granted Momo “free, irrevocable, permanent, transferable, and relicense-able” rights to any user-generated content.

This prompted the China E-Commerce Research Center to release a statement on Monday claiming that the deepfake app “violates certain laws and standards set by the nation and the industry” and urging authorities to look into Zao.

An anonymous programmer created a new app called DeepNude that uses AI to create nonconsensual porn. If you feed it a picture of a clothed woman, it removes her clothes so that she appears naked. The result is pretty realistic - and blatantly unethical.

This app is the latest evolution of AI-powered deepfake technology, which makes it disturbingly easy to doctor images to make it look like someone said or did something they never actually said or did. Even if you’ve never posed naked for a photo in your life, anyone who downloads DeepNude can make it look as if you did.

So it was really great news when, just days after he released the app, the programmer behind it decided to shut it down on Thursday.

After Motherboard first reported on the “horrifying” new app, other news outlets followed suit with critical coverage. In an excellent example of how public scrutiny can bring unethical AI-powered tech to a halt, the developer then said he realized that “the probability that people will misuse it is too high” (deepnudeapp, June 27, 2019).

Damn straight. The short-lived app was free, easy to use, and fast - the digital disrobing only took 30 seconds. In other words, it had all the ingredients necessary to turn an unsuspecting woman’s existence into a living hell. You don’t need a whole lot of imagination to realize how it could be used to produce revenge porn that will be all the more devastating to the target’s life because of how realistic some of the nude photos look. (For ethical reasons, I’m not going to include examples of the photos in this article.)

Although the programmer’s decision to pull his product is welcome, he capped off the announcement with a conclusion that seems naive at best and disingenuous at worst: “The world is not yet ready for DeepNude.”

How could the world ever be ready for an app whose sole and explicit purpose is to transform regular photos of women into nudes within seconds? Or, put another way, of course people were going to “misuse” the app. There was no way to use it other than for that very sort of “misuse.” Note that the app only worked on women (if you tried to use it on a photo of a man, it just added female genitalia to him) - which right away should make you dubious about the programmer’s original intentions.

Deepfakes are most often discussed as a threat in the political realm, because of their potential to sow misinformation and fake news. You may have heard them discussed in relation to the doctored video of House Speaker Nancy Pelosi that went viral in May, or the fake video of Facebook CEO Mark Zuckerberg that made the rounds this month. (In both cases, Facebook refused to remove the videos from its platform.) Congress is holding hearings about the technology, and Rep. Yvette Clarke (D-NY) has put forward a bill known as the Deepfakes Accountability Act.

Less discussed is the unique danger that deepfake technology poses to women. It’s time we realized that when our AI systems are not aligned with our ethical values, women are sometimes particularly apt to suffer.

In the deepfake era, every woman is a potential victim

Since the public started playing around with deepfake technology a couple of years ago, it’s been used to harm women. In 2017, fake celebrity porn videos started appearing in the subreddit r/deepfakes. People had altered videos of actual porn actors, swapping out their faces for those of celebrities. In February 2018, Reddit banned the community for distributing “involuntary pornography.”